It is possible to install Spark on a standalone machine. Whilst you won’t get the benefits of parallel processing associated with running Spark on a cluster, a standalone installation provides a convenient environment for testing new code.

Sep 14, 2015 winutils. Windows binaries for Hadoop versions. These are built directly from the same git commit used to create the official ASF releases; they are checked out and built on a windows VM which is dedicated purely to testing Hadoop/YARN apps on Windows.

This blog explains how to install Spark on a standalone Windows 10 machine. It uses Jupyter Notebooks, installed through Anaconda, as an IDE for Python development; other Python IDEs are available. Installing Spark consists of the following stages:

- Install the Java JDK

- Install Scala

- Download Winutils

- Install Anaconda – as an IDE for Python development

- Install Spark

Installing Spark requires adding a number of environment variables, so a short section at the beginning explains how to add one.

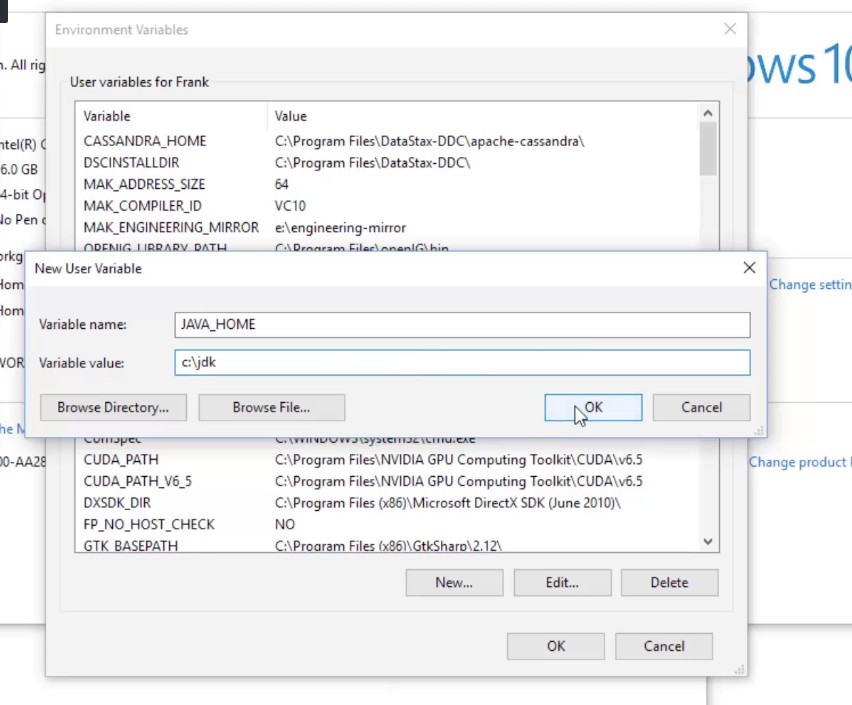

The following steps show how to create an environment variable in Windows 10:

Right click on the Start button and choose Control Panel:

In Control Panel, click on System and Security:

In the next pane, click on System:

In the system pane, click on Advanced system settings:

In System Properties, click on Environment Variables…

Make sure the latest version of the Java JDK is installed. If it is not, download the JDK from the following link:

Once downloaded, copy the JDK folder to C:\Program Files\Java:

Create an environment variable called JAVA_HOME and set its value to the folder containing the JDK (e.g. C:\Program Files\Java\jdk1.8.0_121):

Install Scala from this location:

Download the MSI installer and run it on the machine.

Create an environment variable called SCALA_HOME and point it to the directory where Scala has been installed:

Download the winutils.exe binary from this location:

Create the following folder and save winutils.exe to it:

C:\hadoop\bin

Create an environment variable called HADOOP_HOME and give it the value C:\hadoop:

Edit the PATH environment variable to include %HADOOP_HOME%\bin:

Create a folder called hive in the following location:

C:\tmp\hive

Run the following line in Command Prompt to put permissions onto the hive directory:

You can check permissions with the following command:
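The commands themselves are not reproduced in this post, so the sketch below is an assumption rather than the original text. It builds the two `winutils.exe` calls (the `chmod` and `ls` subcommands) as argument lists, using the C:\hadoop and C:\tmp\hive locations from the steps above. The function name `winutils_commands` and the `777` mode are illustrative choices.

```python
import subprocess
import sys
from pathlib import PureWindowsPath


def winutils_commands(hadoop_home=r"C:\hadoop", hive_dir=r"C:\tmp\hive"):
    """Return the (set-permissions, check-permissions) commands as argument lists."""
    # winutils.exe is assumed to live in <HADOOP_HOME>\bin, as set up earlier.
    winutils = str(PureWindowsPath(hadoop_home) / "bin" / "winutils.exe")
    set_permissions = [winutils, "chmod", "777", hive_dir]  # assumed mode
    check_permissions = [winutils, "ls", hive_dir]
    return set_permissions, check_permissions


if __name__ == "__main__":
    for command in winutils_commands():
        print(" ".join(command))
        # Only attempt to run the command on Windows, where winutils.exe exists.
        if sys.platform == "win32":
            subprocess.run(command, check=True)
```

The printed lines can also be pasted straight into Command Prompt instead of being run from Python.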

At the moment (February 2017), Spark does not work with Python 3.6. Download a version of Anaconda that uses Python 3.5 or earlier from the following link (I downloaded Anaconda3-4.2.0):

If possible, make sure Anaconda is saved to the following folder:

C:\Program Files\Anaconda3

Add an environment variable called PYTHONPATH and set it to the folder where Anaconda is installed:

Download the latest version of Spark from the following link. Make sure the package type says pre-built for Hadoop 2.7 and later.

As of writing this post (February 2017) the machine learning add-ins didn’t work in Spark 2.0. If you want to use the machine learning utilities, you need to download Spark 1.6.

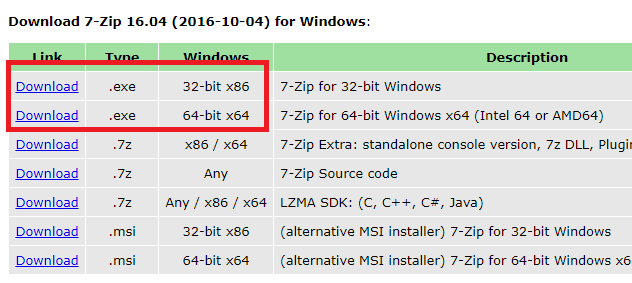

Once downloaded, change the extension of the file from tar to zip, so that the file name reads spark-2.1.0-bin-hadoop2.7.zip. Extract the file using WinRAR or WinZip.

Within the extracted folder is a file without an extension. Add a zip extension to the file and extract again.

Create a folder at C:\Spark and copy the contents of the second extracted folder to it.
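If renaming and extracting twice feels fiddly, the download can also be unpacked with Python's standard `tarfile` module. The sketch below assumes the download is a tar archive, optionally gzip-compressed; `extract_spark_archive` is an illustrative helper name, not part of Spark.

```python
import tarfile
from pathlib import Path


def extract_spark_archive(archive_path, destination):
    """Unpack a .tar, .tar.gz or .tgz archive and return the top-level names."""
    destination = Path(destination)
    destination.mkdir(parents=True, exist_ok=True)
    # "r:*" lets tarfile detect the compression (none, gzip, bz2, xz) itself.
    with tarfile.open(archive_path, mode="r:*") as archive:
        archive.extractall(destination)
    return sorted(entry.name for entry in destination.iterdir())
```

For example, `extract_spark_archive(r"C:\Downloads\spark-2.1.0-bin-hadoop2.7.tgz", r"C:\Temp\spark")` would leave a single folder whose contents can then be copied to C:\Spark. Both paths in that call are hypothetical.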

Go to the folder C:\Spark\conf and open the file log4j.properties.template using WordPad.

Find the log4j.rootCategory setting and change it from INFO to WARN.

Save the changes to the file.

Remove the template extension from the file name so that the file name becomes log4j.properties.
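The same log4j change can be scripted instead of made by hand in WordPad. This is a minimal sketch that assumes the template contains a line of the form `log4j.rootCategory=INFO, console`; the helper names are illustrative. Unlike the manual steps, it leaves the template in place and writes a new log4j.properties next to it.

```python
import re
from pathlib import Path


def quiet_root_logger(properties_text, old_level="INFO", new_level="WARN"):
    """Change the level on the log4j.rootCategory line and leave the rest alone."""
    pattern = re.compile(
        r"^(\s*log4j\.rootCategory\s*=\s*)" + re.escape(old_level) + r"\b",
        re.MULTILINE,
    )
    return pattern.sub(lambda match: match.group(1) + new_level, properties_text)


def create_log4j_properties(conf_dir):
    """Write log4j.properties from log4j.properties.template in conf_dir."""
    conf_dir = Path(conf_dir)
    template = conf_dir / "log4j.properties.template"
    target = conf_dir / "log4j.properties"
    target.write_text(quiet_root_logger(template.read_text()))
    return target
```

Calling `create_log4j_properties(r"C:\Spark\conf")` would then produce the file that Spark reads at start-up.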

Add an environment variable called SPARK_HOME and give it the value of the folder where Spark was copied to (C:\Spark):

Add the following value to the PATH environment variable:

%SPARK_HOME%\bin

Add the following variables if you want the default program that opens with Spark to be Jupyter Notebooks.

Add the environment variable PYSPARK_DRIVER_PYTHON and give it the value jupyter.

Add the environment variable PYSPARK_DRIVER_PYTHON_OPTS and give it the value notebook.
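With this many variables it is easy to miss one, so a quick check from Python can help. The sketch below lists the variables named in this post that are missing from an environment mapping, and checks that the `bin` folders of HADOOP_HOME and SPARK_HOME appear in PATH. The function names are illustrative, and PATH is assumed to use the Windows `;` separator.

```python
import os
from pathlib import PureWindowsPath

# Variables this post asks you to create.
REQUIRED_VARIABLES = ("JAVA_HOME", "SCALA_HOME", "HADOOP_HOME", "PYTHONPATH", "SPARK_HOME")


def missing_variables(env, required=REQUIRED_VARIABLES):
    """Return the required variable names that are unset or empty in env."""
    return [name for name in required if not env.get(name)]


def missing_path_entries(env, homes=("HADOOP_HOME", "SPARK_HOME")):
    """Return the *_HOME names whose bin folder is not listed in PATH."""
    entries = [PureWindowsPath(item) for item in env.get("PATH", "").split(";") if item]
    missing = []
    for name in homes:
        home = env.get(name)
        # PureWindowsPath compares case-insensitively, like Windows itself.
        if not home or PureWindowsPath(home) / "bin" not in entries:
            missing.append(name)
    return missing


if __name__ == "__main__":
    print("Missing variables:", missing_variables(os.environ))
    print("Missing PATH entries for:", missing_path_entries(os.environ))
```

Open a new Command Prompt before running the check, because windows that are already open do not see newly added variables.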

To use Spark, open command prompt, then type in the following two commands:

Jupyter notebooks should open up.

Once Jupyter Notebooks has opened, type the following code (the notice file should be part of the Spark download):
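The original code is not reproduced here, so the following is only a sketch of a typical first test. It assumes the notebook was started through `pyspark`, which normally provides a ready-made SparkContext named `sc`, and that the NOTICE file sits directly under SPARK_HOME. The helpers `notice_path` and `count_lines` are illustrative names.

```python
import os


def notice_path(spark_home):
    """Build the path to the NOTICE file shipped in the Spark folder."""
    return os.path.join(spark_home, "NOTICE")


def count_lines(sc, path):
    """Count the lines of a text file through a SparkContext."""
    # textFile creates an RDD with one element per line; count() triggers the job.
    return sc.textFile(path).count()


# In a notebook launched via pyspark, `sc` should already exist, so the call is:
# count_lines(sc, notice_path(os.environ["SPARK_HOME"]))
```

If the call returns a number rather than an error, Spark is reading files and running jobs locally.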

Hopefully, you should now have a version of Spark running locally on your machine.

Hadoop is released as source code tarballs with corresponding binary tarballs for convenience. The downloads are distributed via mirror sites and should be checked for tampering using GPG or SHA-512.

| Version | Release date | Source download | Binary download | Release notes |
|---|---|---|---|---|
| 2.10.0 | 2019 Oct 29 | source (checksum signature) | binary (checksum signature) | Announcement |
| 3.1.3 | 2019 Oct 21 | source (checksum signature) | binary (checksum signature) | Announcement |
| 3.2.1 | 2019 Sep 22 | source (checksum signature) | binary (checksum signature) | Announcement |
| 3.1.2 | 2019 Feb 6 | source (checksum signature) | binary (checksum signature) | Announcement |
| 2.9.2 | 2018 Nov 19 | source (checksum signature) | binary (checksum signature) | Announcement |

To verify Hadoop releases using GPG:

- Download the release hadoop-X.Y.Z-src.tar.gz from a mirror site.

- Download the signature file hadoop-X.Y.Z-src.tar.gz.asc from Apache.

- Download the Hadoop KEYS file.

- gpg --import KEYS

- gpg --verify hadoop-X.Y.Z-src.tar.gz.asc

To perform a quick check using SHA-512:

- Download the release hadoop-X.Y.Z-src.tar.gz from a mirror site.

- Download the checksum hadoop-X.Y.Z-src.tar.gz.sha512 or hadoop-X.Y.Z-src.tar.gz.mds from Apache.

- shasum -a 512 hadoop-X.Y.Z-src.tar.gz
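On Windows, where `shasum` may not be available, the SHA-512 check can be done with Python's `hashlib` instead. This is a minimal sketch. The helper names are illustrative, and `expected_hex` is assumed to be just the hexadecimal digest copied out of the checksum file, without the file name.

```python
import hashlib


def sha512_of_file(path, chunk_size=1024 * 1024):
    """Return the SHA-512 hex digest of a file, reading it in chunks."""
    digest = hashlib.sha512()
    with open(path, "rb") as handle:
        # Read in blocks so large release tarballs are not loaded into memory at once.
        for chunk in iter(lambda: handle.read(chunk_size), b""):
            digest.update(chunk)
    return digest.hexdigest()


def matches_checksum(path, expected_hex):
    """Compare a file's digest with a published one, ignoring case and whitespace."""
    normalized = "".join(expected_hex.split()).lower()
    return sha512_of_file(path) == normalized
```

A call such as `matches_checksum("hadoop-X.Y.Z-src.tar.gz", published_digest)` returns `True` only when the download matches the published value. Here `published_digest` is a placeholder for the string taken from Apache.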

All previous releases of Hadoop are available from the Apache release archive site.

Many third parties distribute products that include Apache Hadoop and related tools. Some of these are listed on the Distributions wiki page.

License

The software is licensed under the Apache License 2.0.